AI has moved quickly from experimentation to expectation. Many organisations now have AI tools embedded across sales, service, marketing, and operations. Chatbots are live. Predictive models are running. Generative AI is drafting responses, summaries, and insights.

Yet despite this progress, a common sentiment keeps surfacing at the leadership level:

“We’ve invested in AI, but we’re not seeing the impact we expected.”

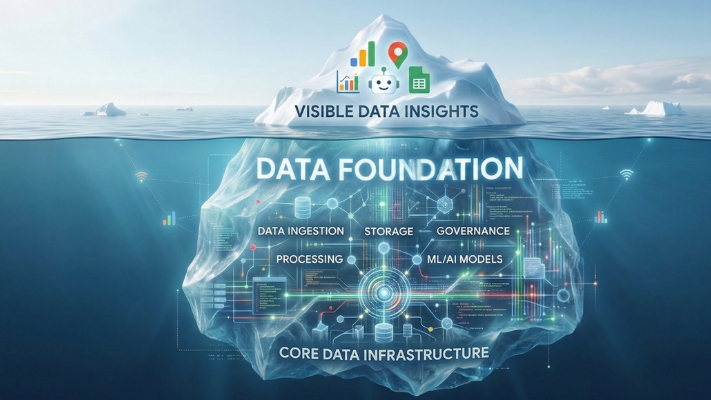

In most cases, the issue is not the AI itself.

It’s the data underneath it.

AI does not fix weak foundations. It exposes them.

The Illusion of AI Progress

Modern AI platforms are increasingly easy to deploy. Vendors promise faster time to value, minimal setup, and immediate productivity gains. This creates the impression that AI maturity is driven by tool adoption.

In reality, AI capability is constrained by the quality, structure, and accessibility of the data it relies on.

When customer data is fragmented across systems, when definitions are inconsistent, or when ownership is unclear, AI outputs become unreliable. Predictions feel vague. Recommendations lack context. Automation creates noise instead of clarity.

This is why many AI initiatives stall after initial pilots. The technology works, but the environment does not support it.

Why Data Foundations Matter More Than Models

AI systems do not reason in isolation. They synthesise patterns from existing information. If that information is incomplete or contradictory, the results reflect that.

Across Cloudsmiths’ work with enterprise clients, four data-related issues consistently undermine AI outcomes.

1. Fragmented data landscapes

Customer, product, and operational data often live in disconnected platforms. AI tools struggle to form a coherent picture when context is spread across CRM, ERP, data warehouses, and third-party systems.

2. Inconsistent definitions and metrics

When teams disagree on what constitutes a “customer”, an “active case”, or a “qualified lead”, AI outputs vary depending on which source is queried. Trust erodes quickly.

3. Poor data quality and governance

Outdated records, missing fields, and manual workarounds limit the usefulness of AI-driven insights. Without governance, models amplify inaccuracies at scale.

4. Limited real-time access

Many AI use cases depend on timely information. Batch updates and delayed pipelines reduce the relevance of recommendations and predictions.

AI does not solve these problems. It magnifies them.
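To make the issue of inconsistent definitions concrete, here is a minimal Python sketch. The records, field names, and both team definitions of an "active customer" are hypothetical, invented purely for illustration. It shows how two reasonable definitions applied to the same data produce different answers, which is the ambiguity any AI tool built on top would inherit.

```python
from datetime import date

# Hypothetical customer records; the field names are illustrative assumptions.
customers = [
    {"id": 1, "last_purchase": date(2024, 5, 1), "has_open_contract": True},
    {"id": 2, "last_purchase": date(2023, 1, 15), "has_open_contract": True},
    {"id": 3, "last_purchase": date(2024, 4, 20), "has_open_contract": False},
    {"id": 4, "last_purchase": date(2022, 11, 3), "has_open_contract": False},
]

TODAY = date(2024, 6, 1)  # fixed reference date so the example is reproducible


def active_by_sales(customer):
    """Assumed sales definition: purchased within the last 90 days."""
    return (TODAY - customer["last_purchase"]).days <= 90


def active_by_finance(customer):
    """Assumed finance definition: currently holds an open contract."""
    return customer["has_open_contract"]


sales_active = {c["id"] for c in customers if active_by_sales(c)}
finance_active = {c["id"] for c in customers if active_by_finance(c)}

# Customers that only one of the two definitions counts as active.
disputed = sales_active ^ finance_active

print("Active (sales):  ", sorted(sales_active))
print("Active (finance):", sorted(finance_active))
print("Disputed:        ", sorted(disputed))
```

In this toy dataset, half of the customers are classified differently depending on which team's definition is used. A model trained or prompted on either view would give answers the other team does not recognise.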

The Cost of Skipping the Data Layer

When organisations introduce AI before addressing data foundations, the impact is rarely neutral.

Common consequences include:

- declining trust in AI recommendations

- low adoption by frontline teams

- increased manual verification

- inconsistent customer experiences

- higher operational complexity

- disappointing return on investment

In some cases, teams respond by adding more AI tools, assuming the problem is the choice of model. This often deepens the problem rather than solving it.

What “AI-Ready Data” Actually Means

AI-ready data is not about perfection. It is about reliability, consistency, and accessibility.

In practice, this means:

- a unified view of key entities such as customers, accounts, and products

- clearly defined ownership of critical datasets

- consistent metrics across systems

- governed data pipelines with visibility and accountability

- integration between operational platforms and analytics environments

- the ability to surface relevant data in real time

These foundations allow AI systems to operate with context rather than guesswork.
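As a rough illustration of what assessing these foundations might look like in practice, the sketch below runs three basic checks over a handful of records: completeness, freshness, and duplication. Everything in it is an assumption made for the example, including the required fields, the 365-day staleness threshold, and the sample data. It is a starting point for discussion, not a complete readiness assessment.

```python
from datetime import date

# Assumed for illustration: which fields count as required, and when a record is stale.
REQUIRED_FIELDS = ("email", "owner", "updated")
MAX_AGE_DAYS = 365


def readiness_report(records, today):
    """Count incomplete, stale, and duplicate records in a list of dicts."""
    incomplete = sum(
        1 for r in records if any(not r.get(field) for field in REQUIRED_FIELDS)
    )
    stale = sum(
        1
        for r in records
        if r.get("updated") and (today - r["updated"]).days > MAX_AGE_DAYS
    )
    # Normalise emails so trivial differences in case or whitespace still match.
    emails = [r["email"].strip().lower() for r in records if r.get("email")]
    duplicate_emails = len(emails) - len(set(emails))
    return {
        "total": len(records),
        "incomplete": incomplete,
        "stale": stale,
        "duplicate_emails": duplicate_emails,
    }


# Hypothetical sample records.
records = [
    {"email": "ana@example.com", "owner": "sales", "updated": date(2024, 5, 10)},
    {"email": "Ana@Example.com ", "owner": "service", "updated": date(2022, 1, 5)},
    {"email": "", "owner": "sales", "updated": date(2024, 3, 1)},
    {"email": "raj@example.com", "owner": None, "updated": date(2024, 4, 12)},
]

report = readiness_report(records, today=date(2024, 6, 1))
print(report)
```

Even simple counts like these give teams a shared, measurable baseline to improve before expanding AI use cases.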

Where Cloud, CRM, and Data Strategy Converge

AI success sits at the intersection of cloud infrastructure, data engineering, and business platforms like CRM. Decisions made in one area directly affect outcomes in the others.

For example:

- CRM implementations shape how customer data is captured and structured

- Cloud architecture determines scalability, latency, and cost

- Data platforms define how information is integrated, transformed, and governed

AI sits on top of this stack. If the layers below are misaligned, AI struggles to deliver consistent value.

This is why AI initiatives increasingly fail when treated as standalone projects rather than part of a broader data strategy.

How Organisations Should Sequence AI Investment

The organisations seeing the strongest AI outcomes tend to follow a similar sequence.

- Stabilise data foundations

Focus on data quality, integration, and governance before expanding AI use cases.

- Align platforms and workflows

Ensure CRM, data platforms, and operational systems reflect how teams actually work.

- Introduce targeted AI use cases

Start with high-impact, low-risk applications where context is clear and value is measurable.

- Build trust through consistency

Reliable outputs drive adoption more than advanced features.

- Scale with intent

Expand AI usage once confidence and maturity are established.

This approach reduces risk and improves long-term return.

The Role of Cloudsmiths

Cloudsmiths helps organisations approach AI from the foundation up. Our work focuses on aligning data, platforms, and cloud architecture so AI can operate with clarity and confidence.

We support teams by:

- assessing data readiness before AI deployment

- designing unified data architectures

- integrating CRM, cloud, and analytics platforms

- establishing governance frameworks

- identifying AI use cases that make sense operationally

AI delivers value when it is built on solid ground.

Where This Leaves Leaders

AI initiatives fail less often because of model limitations and more often because of foundational gaps. As AI becomes embedded across enterprise platforms, the organisations that succeed will be those that invest in data clarity first.

Before adding another AI capability, leaders should ask a simpler question:

Is our data ready to support the decisions we expect AI to make?

Cloudsmiths helps organisations answer that question, and build from there with confidence.
